Paperclip is one of the clearest attempts yet to answer a question many AI builders quietly run into after the novelty wears off: what happens when you no longer have one agent, but ten, twenty, or fifty?

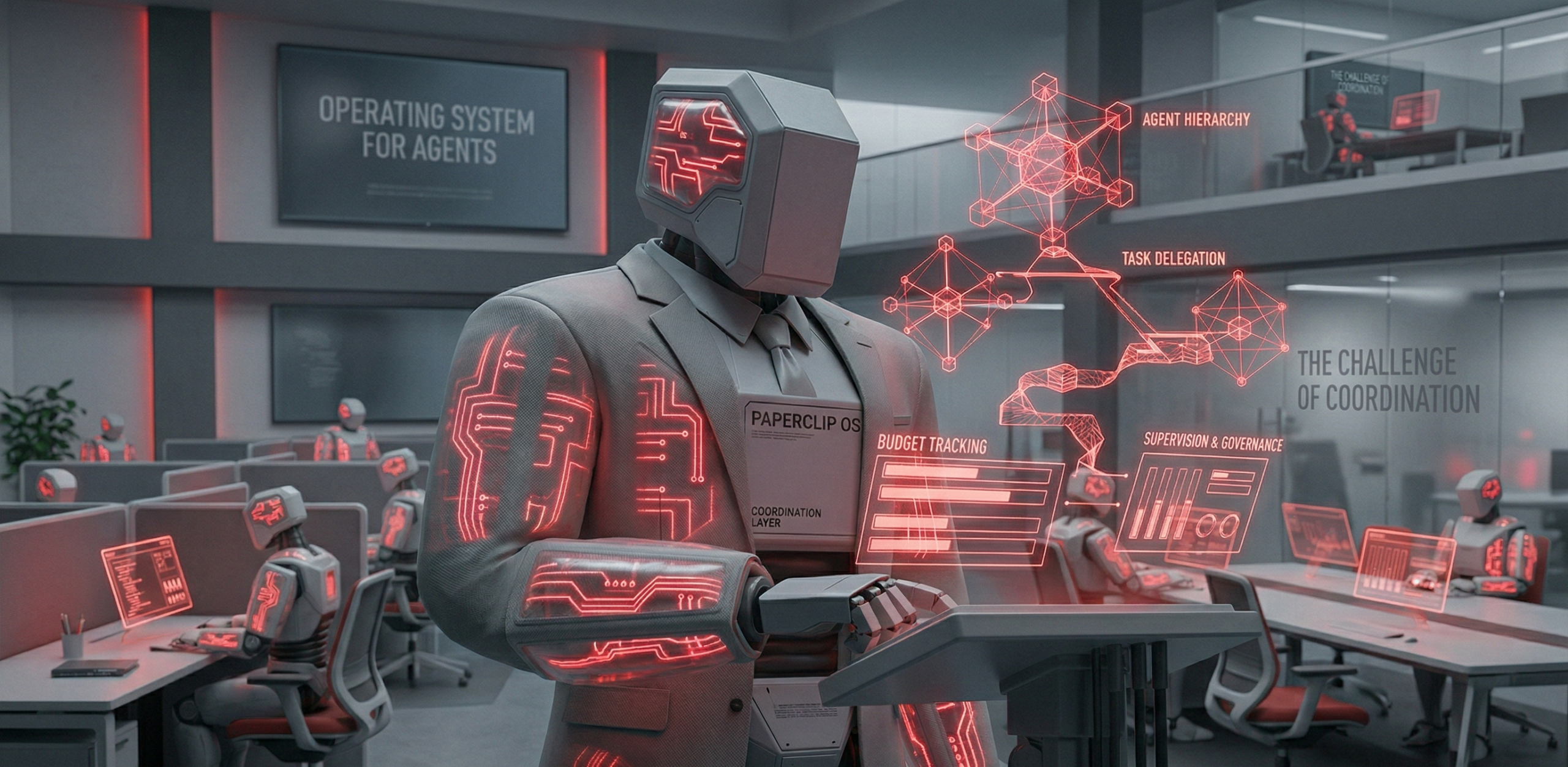

The repo, available at paperclipai/paperclip, is not trying to become another chatbot shell or agent framework. Its pitch is sharper than that. If tools like OpenClaw, Claude Code, Codex, or Cursor act as individual workers, Paperclip positions itself as the company layer above them: the operating system for assigning goals, defining hierarchy, tracking budgets, and keeping autonomous work from turning into chaos.

That framing matters. Most teams experimenting with AI automation eventually discover that the hard part is not generating text or running code. The hard part is coordination. Which agent owns what? How does context persist? Who approves risky actions? What happens when costs spike? How do you supervise recurring work without babysitting terminals all day?

Paperclip is built around exactly those questions.

What Paperclip actually is

At a technical level, Paperclip is an open-source Node.js server with a React UI. Locally, it can run with an embedded PostgreSQL setup, which lowers the barrier to trying it without standing up a full production stack first. For production, you can point it at your own PostgreSQL instance and deploy it like a conventional server application.

The product model is more interesting than the stack, though. Paperclip treats AI workers as organizational actors rather than isolated bots. You define goals, create company structures, assign roles, track spending, review work through tickets, and let agents wake up on heartbeats or events to do their jobs.

In other words, it replaces the common pile of agent scripts, ad hoc memory, cron jobs, dashboards, and approval hacks with a more opinionated control plane.

The big idea: from prompting tools to managing an AI org

The repo’s most compelling insight is that multi-agent systems break down when they are treated as a collection of tools instead of a coordinated business system.

That is why Paperclip introduces concepts that sound less like developer tooling and more like operations management:

- Org charts so agents have reporting lines and roles

- Goals and task ancestry so work stays aligned with business intent

- Heartbeats so agents wake up on schedule and continue persistent work

- Budgets so token burn is visible and constrained

- Governance so approvals, overrides, and human control are explicit

- Ticket-based communication so each task has an owner, a thread, and an audit trail

- Multi-company isolation so one deployment can manage separate ventures safely

This is a subtle but important design decision. Plenty of AI stacks can trigger an agent. Far fewer can answer, with confidence, why an agent is doing a task, who approved it, how much it is costing, and what broader goal it serves.

Why the repo feels timely

Paperclip arrives at a moment when AI operations are maturing from demos into real internal systems.

A solo founder might start with one coding agent and one content agent. Very quickly, that expands into a mess: one session handles support replies, another drafts blog posts, another runs SEO checks, another patches code, another monitors analytics, and another updates docs. Suddenly the limiting factor is no longer model capability. It is management overhead.

That is where Paperclip’s value proposition becomes practical instead of theoretical.

Rather than asking teams to hand-roll orchestration from Trello boards, shell scripts, chat tabs, and fragmented memory, it gives them a native model for AI work: work is assigned through tickets, agents operate inside a hierarchy, execution is tied to budgets, sessions persist across reboots, recurring work is driven by heartbeats, and everything is traced.

Real-world use cases where Paperclip makes sense

1. A software studio with specialized AI roles

A small engineering team can use Paperclip to structure AI workers the same way it structures humans.

One agent can act as CTO-level planner, another as implementation engineer, another as QA analyst, and another as documentation owner. Instead of manually copying context between tools, each task inherits its purpose from a project and company goal. The result is not just better continuity, but better prioritization.

This matters when the business objective is more important than the individual coding task. "Fix the webhook bug" becomes part of "improve onboarding conversion," not a disconnected line item.
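To make the idea of task ancestry concrete, here is a minimal sketch of how a task could resolve its purpose by walking parent links up to a company goal. The `WorkNode` shape, the `ancestry` helper, and the intermediate project title are hypothetical, chosen for illustration; Paperclip's actual schema may look quite different.

```typescript
// Hypothetical shapes for illustration; Paperclip's real schema may differ.
type NodeKind = "goal" | "project" | "task";

interface WorkNode {
  id: string;
  kind: NodeKind;
  title: string;
  parentId: string | null; // null marks a top-level company goal
}

// Walk parent links from a starting node up to its root goal.
function ancestry(nodes: Map<string, WorkNode>, startId: string): WorkNode[] {
  const chain: WorkNode[] = [];
  const seen = new Set<string>();
  let currentId: string | null = startId;
  while (currentId !== null) {
    // Guard against malformed data that loops back on itself.
    if (seen.has(currentId)) throw new Error(`cycle at ${currentId}`);
    seen.add(currentId);
    const node: WorkNode | undefined = nodes.get(currentId);
    if (node === undefined) throw new Error(`unknown node ${currentId}`);
    chain.push(node);
    currentId = node.parentId;
  }
  return chain;
}

const nodes = new Map<string, WorkNode>([
  ["g1", { id: "g1", kind: "goal", title: "Improve onboarding conversion", parentId: null }],
  // The project title below is invented for the example.
  ["p1", { id: "p1", kind: "project", title: "Stabilize signup integrations", parentId: "g1" }],
  ["t1", { id: "t1", kind: "task", title: "Fix the webhook bug", parentId: "p1" }],
]);

// Titles from the task up to the goal, joined for display.
const why = ancestry(nodes, "t1").map((n) => n.title).join(" <- ");
```

With a chain like this attached to every ticket, an agent (or a human reviewer) can see the business reason for a task without anyone copying context by hand.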

2. A content and marketing operation that runs on schedules

Paperclip looks particularly useful for content-heavy businesses.

Imagine a content company with agents for keyword research, article drafting, editing, newsletter preparation, social copy generation, and performance review. Those jobs are naturally cyclical, deadline-driven, and cross-functional. Heartbeats give those agents a cadence. Budgets prevent experiments from turning into silent token sinks. Governance gives a human editor the final say on publication.

That combination is much more realistic than expecting a single mega-agent to be strategist, writer, editor, analyst, and scheduler at once.

3. A portfolio operator running several ventures

One of the repo’s stronger architectural claims is multi-company isolation.

That makes Paperclip attractive for operators who manage several AI-assisted products, agencies, or internal experiments at the same time. Instead of spinning up an entirely separate orchestration stack for each venture, they can run one deployment with isolated companies, separate audit trails, distinct budgets, and different org structures.

For agencies, holding companies, or builders running multiple niche products, that is a serious operational advantage.
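One common way to get this kind of isolation is to make every read go through a company-scoped view, so that no code path can query across tenants by accident. The sketch below assumes that approach. The `AuditEntry`, `CompanyView`, and `AuditLog` names are hypothetical, and an in-memory array stands in for the database.

```typescript
// Hypothetical record and store; illustrates tenant scoping, not Paperclip's code.
interface AuditEntry {
  companyId: string;
  agentId: string;
  action: string;
  costUsd: number;
}

// A read-only view that only ever holds one company's entries.
class CompanyView {
  private readonly entries: readonly AuditEntry[];

  constructor(entries: readonly AuditEntry[]) {
    this.entries = entries;
  }

  actions(): string[] {
    return this.entries.map((e) => e.action);
  }

  totalSpendUsd(): number {
    return this.entries.reduce((sum, e) => sum + e.costUsd, 0);
  }
}

class AuditLog {
  private entries: AuditEntry[] = [];

  record(entry: AuditEntry): void {
    this.entries.push(entry);
  }

  // The only read path: callers must name a company to see anything.
  forCompany(companyId: string): CompanyView {
    return new CompanyView(this.entries.filter((e) => e.companyId === companyId));
  }
}

const log = new AuditLog();
log.record({ companyId: "agency", agentId: "writer", action: "draft newsletter", costUsd: 3 });
log.record({ companyId: "agency", agentId: "editor", action: "review newsletter", costUsd: 2 });
log.record({ companyId: "saas", agentId: "engineer", action: "patch webhook", costUsd: 7 });

const agencyView = log.forCompany("agency");
const saasView = log.forCompany("saas");
```

Here `agencyView` reports only the agency's two actions and its own spend, while `saasView` sees only the single engineering action.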

4. Any setup where cost control and auditability matter

A lot of AI automation breaks not because the outputs are bad, but because the economics are invisible until they hurt.

Paperclip’s budgeting model directly targets that failure mode. Per-agent monthly budgets, warnings, and stop conditions are the kind of simple controls that become essential the moment autonomous jobs run long enough to surprise you.
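A budget gate of this kind can be very small. The following sketch assumes a per-agent monthly limit with a warning threshold and a hard stop. The `BudgetPolicy` fields, the `checkBudget` function, and the dollar figures are illustrative assumptions rather than Paperclip's actual configuration.

```typescript
// Hypothetical policy shape; field names and thresholds are illustrative assumptions.
interface BudgetPolicy {
  monthlyLimitUsd: number;
  warnAtFraction: number; // e.g. 0.8 warns once 80% of the limit is reached
}

type BudgetDecision = "ok" | "warn" | "stop";

// Decide whether a run with an estimated cost may proceed this month.
function checkBudget(
  policy: BudgetPolicy,
  spentUsd: number,
  estimatedCostUsd: number,
): BudgetDecision {
  const projected = spentUsd + estimatedCostUsd;
  if (projected > policy.monthlyLimitUsd) return "stop";
  if (projected >= policy.monthlyLimitUsd * policy.warnAtFraction) return "warn";
  return "ok";
}

const policy: BudgetPolicy = { monthlyLimitUsd: 50, warnAtFraction: 0.8 };

const decisions: BudgetDecision[] = [
  checkBudget(policy, 10, 5), // projected 15, well under the limit
  checkBudget(policy, 38, 4), // projected 42, past the 40 warning line
  checkBudget(policy, 48, 4), // projected 52, over the 50 limit
];
```

Checking the projected spend before a run starts, rather than the actual spend afterwards, is what turns a report into a stop condition.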

The audit story matters too. If an AI operation is handling customer support, growth experiments, or production-adjacent engineering work, the ability to trace conversations, approvals, tool calls, and decision paths stops being optional.

What stands out technically

From the repo and docs, several implementation choices stand out.

First, Paperclip is runtime-agnostic by design. It does not force one preferred model vendor or one specific agent runtime. The system is built to work with OpenClaw, Claude Code, Codex, Cursor, shell-based agents, and HTTP-driven integrations. That is a smart decision because most serious teams are already heterogeneous.
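Runtime-agnostic designs usually come down to a narrow adapter contract that each runtime implements. The sketch below is one way that could look. The `AgentRuntime` interface, the two stand-in adapters, and the `RuntimeRegistry` are all hypothetical, and the calls are kept synchronous for brevity where real adapters would be asynchronous.

```typescript
// Hypothetical adapter contract; the real integration surface may look different.
interface TaskRequest {
  ticketId: string;
  instructions: string;
}

interface TaskResult {
  ticketId: string;
  output: string;
}

interface AgentRuntime {
  readonly kind: string;
  // Synchronous here for brevity; a real adapter would return a Promise.
  run(task: TaskRequest): TaskResult;
}

// Stand-in adapters. Real ones would spawn a CLI process or call an HTTP endpoint.
const fakeShellRuntime: AgentRuntime = {
  kind: "shell",
  run(task) {
    return { ticketId: task.ticketId, output: `shell ran: ${task.instructions}` };
  },
};

const fakeHttpRuntime: AgentRuntime = {
  kind: "http",
  run(task) {
    return { ticketId: task.ticketId, output: `http posted: ${task.instructions}` };
  },
};

class RuntimeRegistry {
  private runtimes = new Map<string, AgentRuntime>();

  register(runtime: AgentRuntime): void {
    this.runtimes.set(runtime.kind, runtime);
  }

  // Route a task to whichever runtime the assigned agent uses.
  dispatch(kind: string, task: TaskRequest): TaskResult {
    const runtime = this.runtimes.get(kind);
    if (runtime === undefined) throw new Error(`no runtime registered for ${kind}`);
    return runtime.run(task);
  }
}

const registry = new RuntimeRegistry();
registry.register(fakeShellRuntime);
registry.register(fakeHttpRuntime);

const shellResult = registry.dispatch("shell", { ticketId: "T-1", instructions: "run tests" });
const httpResult = registry.dispatch("http", { ticketId: "T-2", instructions: "draft reply" });
```

The orchestration layer only ever sees `TaskRequest` and `TaskResult`, so swapping one agent runtime for another does not touch tickets, budgets, or approvals.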

Second, persistent state across heartbeats is more important than it sounds. Many naive agent systems restart work from scratch on each trigger, which creates repeated context loading, brittle behavior, and needless cost. Paperclip appears to treat continuity as a first-class concern.
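As a rough illustration of what continuity across heartbeats means in practice, the sketch below has an agent load its saved state, process a bounded batch, and persist a cursor before going back to sleep. The `StateStore` class and `AgentState` fields are hypothetical, and an in-memory map stands in for a database table.

```typescript
// Hypothetical state shape; an in-memory Map stands in for a database table.
interface AgentState {
  cursor: number;  // index of the next queue item to process
  notes: string[]; // context carried from one heartbeat to the next
}

class StateStore {
  private rows = new Map<string, string>();

  load(agentId: string): AgentState {
    const raw = this.rows.get(agentId);
    // First wake-up: start from an empty state.
    if (raw === undefined) return { cursor: 0, notes: [] };
    return JSON.parse(raw) as AgentState;
  }

  save(agentId: string, state: AgentState): void {
    // Serialize so each load returns an independent copy.
    this.rows.set(agentId, JSON.stringify(state));
  }
}

// One heartbeat: resume from the saved cursor, handle a bounded batch, persist.
function heartbeat(
  store: StateStore,
  agentId: string,
  queue: string[],
  batchSize: number,
): AgentState {
  const state = store.load(agentId);
  const batch = queue.slice(state.cursor, state.cursor + batchSize);
  for (const item of batch) state.notes.push(`handled ${item}`);
  state.cursor += batch.length;
  store.save(agentId, state);
  return state;
}

const store = new StateStore();
const queue = ["a", "b", "c", "d", "e"];

const afterFirst = heartbeat(store, "seo-agent", queue, 2);  // handles a, b
const afterSecond = heartbeat(store, "seo-agent", queue, 2); // handles c, d
const afterThird = heartbeat(store, "seo-agent", queue, 2);  // handles e
```

Each wake-up picks up where the previous one stopped instead of reprocessing the whole queue, which is where the savings in context loading and cost come from.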

Third, atomic execution and budget enforcement suggest the authors understand the ugly edge cases of orchestration: double assignment, race conditions, runaway loops, and cost drift. Those are not flashy features, but they are exactly the details that separate a toy multi-agent demo from something teams might actually trust.
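Double assignment is typically prevented with a compare-and-set claim: an agent takes a ticket only if it is still unassigned and unchanged since the agent last read it. The sketch below shows that general technique in memory. The `Ticket` and `TicketBoard` names are hypothetical, and nothing here is taken from Paperclip's implementation.

```typescript
// Hypothetical ticket shape; not Paperclip's actual schema.
interface Ticket {
  id: string;
  assignee: string | null;
  version: number; // bumped on every successful change
}

class TicketBoard {
  private tickets = new Map<string, Ticket>();

  add(id: string): void {
    this.tickets.set(id, { id, assignee: null, version: 0 });
  }

  // Compare-and-set: succeed only if the ticket is still unassigned and the
  // caller saw the latest version. Against a SQL database the same idea is a
  // conditional UPDATE ... WHERE assignee IS NULL, followed by a check of the
  // affected row count.
  claim(id: string, agentId: string, expectedVersion: number): boolean {
    const ticket = this.tickets.get(id);
    if (ticket === undefined) return false;
    if (ticket.assignee !== null || ticket.version !== expectedVersion) return false;
    ticket.assignee = agentId;
    ticket.version += 1;
    return true;
  }

  assigneeOf(id: string): string | null {
    const ticket = this.tickets.get(id);
    return ticket === undefined ? null : ticket.assignee;
  }
}

const board = new TicketBoard();
board.add("T-1");

// Two agents both read version 0, then both try to claim the same ticket.
const firstClaim = board.claim("T-1", "engineer-agent", 0);
const secondClaim = board.claim("T-1", "qa-agent", 0);
```

The first claim wins and the second is rejected, so exactly one agent ends up owning the ticket no matter how many woke up at once.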

Fourth, governance with rollback is a strong signal that the project is thinking beyond simple task execution. If you are going to let AI workers operate semi-autonomously, then approvals, revision history, and safe rollback are not extras. They are the control surface.
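To show how approvals, revision history, and rollback fit together, here is a minimal sketch in which a proposed change has no effect until a human approves it and any approved change can be undone. The `GovernedSetting` class, the `Revision` shape, and the refund-policy values are invented for the example.

```typescript
// Hypothetical governance model; names and values are illustrative only.
interface Revision {
  value: string;
  approvedBy: string;
}

class GovernedSetting {
  private revisions: Revision[] = [];
  private pending: string | null = null;

  constructor(initial: string, approvedBy: string) {
    this.revisions.push({ value: initial, approvedBy });
  }

  // The active value is always the latest approved revision.
  current(): string {
    return this.revisions[this.revisions.length - 1].value;
  }

  // An agent may propose a change, but it stays inactive until approved.
  propose(value: string): void {
    this.pending = value;
  }

  approve(reviewer: string): boolean {
    if (this.pending === null) return false;
    this.revisions.push({ value: this.pending, approvedBy: reviewer });
    this.pending = null;
    return true;
  }

  // Drop the latest revision; the initial one can never be removed.
  rollback(): boolean {
    if (this.revisions.length < 2) return false;
    this.revisions.pop();
    return true;
  }
}

const refundPolicy = new GovernedSetting("Refunds up to $20", "founder");

refundPolicy.propose("Refunds up to $200");
const beforeApproval = refundPolicy.current(); // proposal alone changes nothing

refundPolicy.approve("founder");
const afterApproval = refundPolicy.current();

refundPolicy.rollback();
const afterRollback = refundPolicy.current();
```

Because every active value carries the name of whoever approved it, the revision list doubles as an audit trail for risky changes.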

Where Paperclip fits in the current AI tooling landscape

Paperclip is not competing directly with coding agents, and that is probably why it feels fresh.

The repo explicitly argues that it is not a chatbot, an agent framework, a drag-and-drop workflow builder, a prompt manager, or a pull-request review tool.

That positioning is useful because it gives Paperclip a clearer lane.

If you only have one AI assistant helping with scattered tasks, Paperclip is probably overkill. But if you are already stitching together agents, scheduled jobs, role-specific prompts, manual oversight, and cost monitoring, then the project starts to look less like overhead and more like missing infrastructure.

A good way to think about it is this: many tools help you operate an agent. Paperclip is trying to help you operate an organization made of agents.

Practical adoption advice

If you want to evaluate Paperclip seriously, do not start by modeling a futuristic "zero-human company." That marketing phrase is memorable, but the practical value shows up earlier.

A better pilot is to use it in one business function where work is already repetitive, structured, and reviewable.

Good starting points include:

- content production pipelines

- research and reporting teams

- internal dev task coordination

- lead qualification and follow-up workflows

- lightweight operations support for a solo founder

In these cases, Paperclip can serve as the layer that coordinates tasks, preserves context, controls spend, and keeps humans in the approval loop.

That is the real-world path to adoption: not replacing the company overnight, but reducing orchestration overhead in the places where AI workers already create management friction.

The limitations to keep in mind

Paperclip’s ambition is also its biggest challenge.

Any system that tries to model org structures, budgets, tickets, governance, and heterogeneous agent runtimes is taking on significant complexity. Teams will still need to design good agent roles, define sensible boundaries, write strong skills and prompts, and decide which actions require human approval.

Paperclip does not eliminate bad management. It gives you a structure for better management.

That distinction matters. The repo makes a strong case that agent orchestration is a different problem from agent creation. But it also means success with Paperclip will depend less on whether the UI is polished and more on whether operators can build reliable organizational habits around it.

Final verdict

Paperclip is one of the more interesting open-source AI repos to watch because it attacks a problem many teams already feel but few tools address well: the operational mess that appears once AI work becomes continuous, distributed, and expensive.

Its strongest idea is not any single feature. It is the decision to treat AI systems as a managed company rather than a collection of clever automations.

That makes the repo especially relevant for founders, operators, technical marketers, dev agencies, and autonomous-business experimenters who are already past the single-agent stage. If your current setup involves scattered terminals, recurring scripts, too many tabs, vague ownership, and weak cost visibility, Paperclip offers a far more coherent model.

It is still early, and the roadmap shows plenty left to build. But the direction is right. In a market crowded with tools that help you talk to AI, Paperclip stands out by helping you organize AI work like an actual business.